mcp-memory-service

Persistent Shared Memory for AI Agent Pipelines

Open-source memory backend for multi-agent systems.

Agents store decisions, share causal knowledge graphs, and retrieve context in 5ms, without cloud lock-in or API costs.

Works with LangGraph · CrewAI · AutoGen · any HTTP client · Claude Desktop · OpenCode

🎬 See It in Action

Watch the Web Dashboard Walkthrough on YouTube: semantic search, tag browser, document ingestion, analytics, quality scoring, and API docs in under 2 minutes.

🌐 Works with claude.ai (Browser)

Unlike desktop-only MCP servers, mcp-memory-service supports Remote MCP for native claude.ai integration.

What this means:

- ✅ Use persistent memory directly in your browser (no Claude Desktop required)

- ✅ Works on any device (laptop, tablet, phone)

- ✅ Enterprise-ready (OAuth 2.0 + HTTPS + CORS)

- ✅ Self-hosted OR cloud-hosted (your choice)

5-Minute Setup:

# 1. Start server with Remote MCP enabled

MCP_STREAMABLE_HTTP_MODE=1 \
MCP_SSE_HOST=0.0.0.0 \
MCP_SSE_PORT=8765 \
MCP_OAUTH_ENABLED=true \
python -m mcp_memory_service.server

# 2. Expose via Cloudflare Tunnel (or your own HTTPS setup)

cloudflared tunnel --url http://localhost:8765

# → Outputs: https://random-name.trycloudflare.com

# 3. In claude.ai: Settings → Connectors → Add Connector

# Paste the URL: https://random-name.trycloudflare.com/mcp

# OAuth flow will handle authentication automatically
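The same setup can be scripted from Python. The sketch below is illustrative only: the environment variable names and the `/mcp` path are copied from the steps above, while `server_env`, `start_server`, and `connector_url` are hypothetical helper names, and the HTTPS check is an assumption based on step 2.

```python
import subprocess
import sys
from urllib.parse import urlsplit, urlunsplit

# Environment variables copied from step 1 of the setup above.
REMOTE_MCP_ENV = {
    "MCP_STREAMABLE_HTTP_MODE": "1",
    "MCP_SSE_HOST": "0.0.0.0",
    "MCP_SSE_PORT": "8765",
    "MCP_OAUTH_ENABLED": "true",
}


def server_env(base_env):
    """Return a copy of base_env with the Remote MCP settings applied."""
    env = dict(base_env)
    env.update(REMOTE_MCP_ENV)
    return env


def start_server(base_env):
    """Launch the server module from step 1 as a child process (hypothetical helper)."""
    return subprocess.Popen(
        [sys.executable, "-m", "mcp_memory_service.server"],
        env=server_env(base_env),
    )


def connector_url(tunnel_url):
    """Append the /mcp path from step 3 to a public tunnel URL."""
    parts = urlsplit(tunnel_url)
    if parts.scheme != "https":
        # Assumption: the connector URL must be HTTPS, as step 2 implies.
        raise ValueError("expected an HTTPS tunnel URL")
    path = parts.path.rstrip("/") + "/mcp"
    return urlunsplit((parts.scheme, parts.netloc, path, "", ""))
```

Calling `start_server(os.environ)` would start the server with the same settings as the shell command, and `connector_url("https://random-name.trycloudflare.com")` returns the URL to paste into claude.ai.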

Production Setup: See Remote MCP Setup Guide for Let's Encrypt, nginx, and firewall configuration.

Step-by-Step Tutorial: Blog: 5-Minute claude.ai Setup | Wiki Guide

Why Agents Need This

| Without mcp-memory-service | With mcp-memory-service |
|---|---|
| Each agent run starts from zero | Agents retrieve prior decisions in 5ms |
| Memory is local to one graph/run | Memory is shared across all agents and runs |
| You manage Redis + Pinecone + glue code | One self-hosted service, zero cloud cost |
| No causal relationships between facts | Knowledge graph with typed edges (causes, fixes, contradicts) |
| Context window limits create amnesia | Autonomous consolidation compresses old memories |

Key capabilities for agent pipelines:

- Framework-agnostic REST API — 15 endpoints, no MCP client library needed

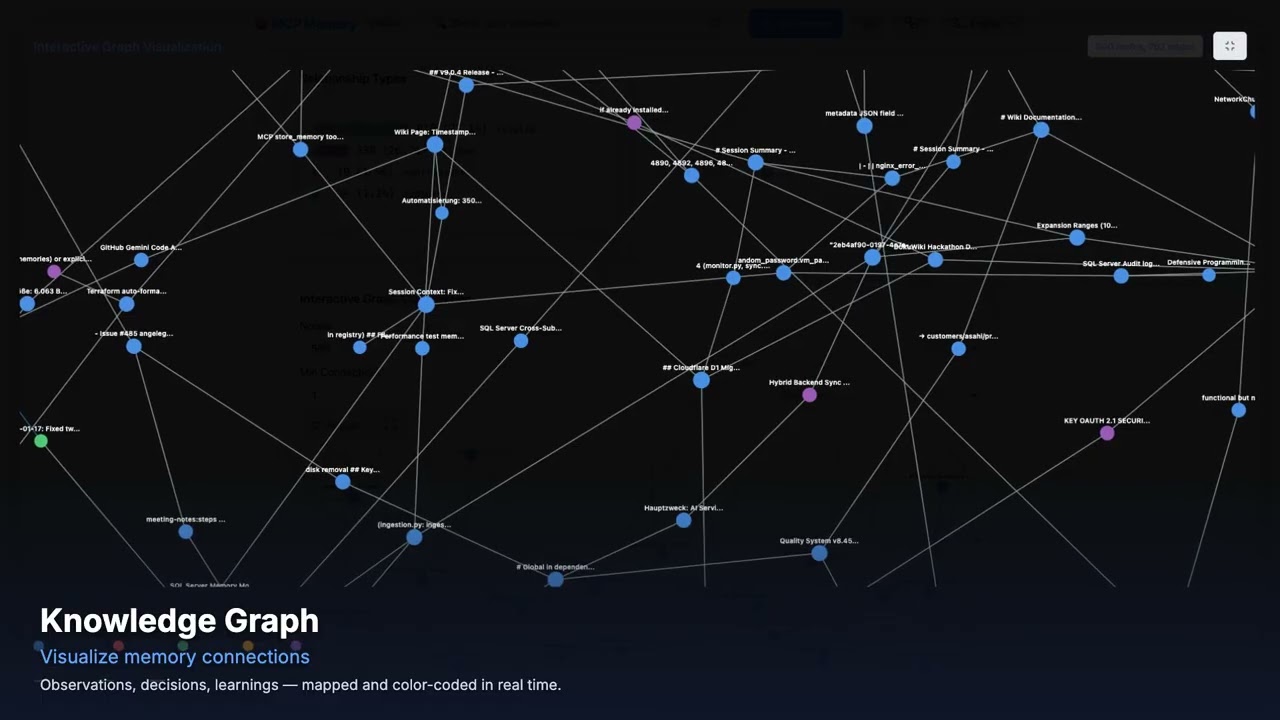

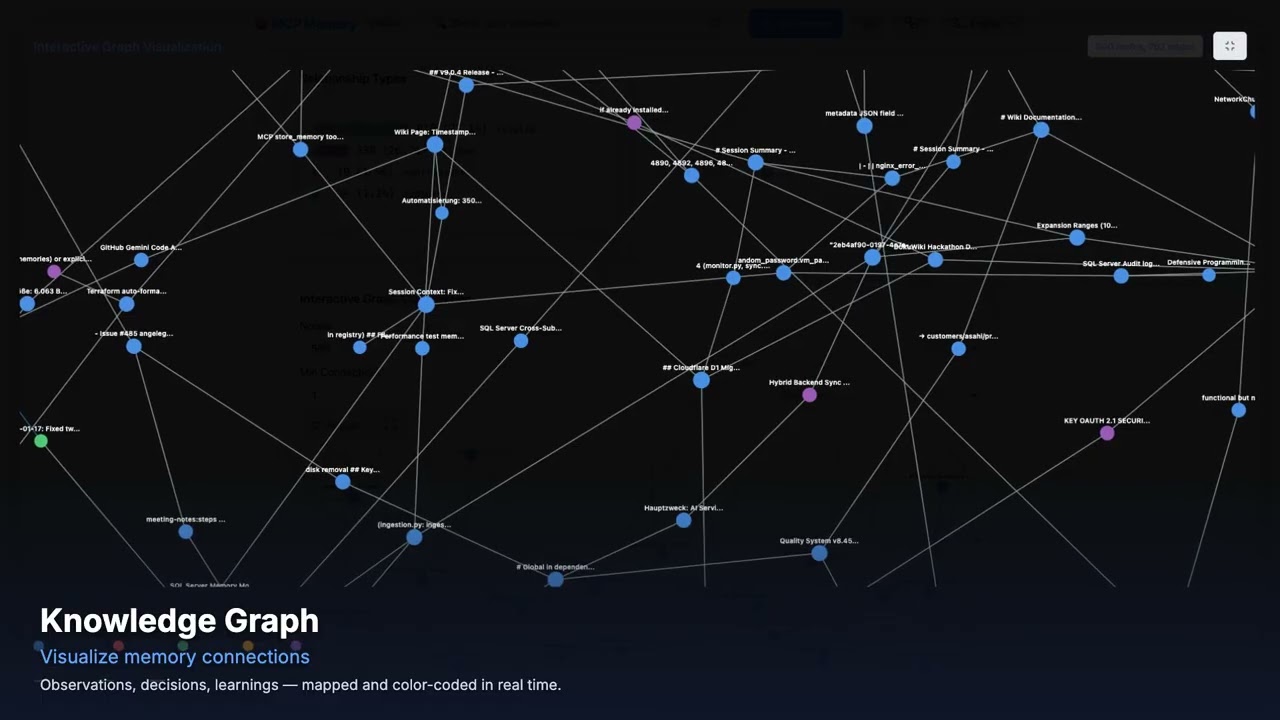

- Knowledge graph — agents share causal chains, not just facts

- `X-Agent-ID` header — auto-tag memories by agent identity for scoped retrieval

- `conversation_id` — bypass deduplication for incremental conversation storage

- SSE events — real-time notifications when any agent stores or deletes a memory

- Embeddings run locally via ONNX — memory never leaves your infrastructure
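To illustrate the SSE events capability listed above, here is a minimal sketch of how a consuming agent might parse a Server-Sent Events stream. It follows the standard `text/event-stream` framing only. The event names (`memory_stored`, `memory_deleted`) and payload fields in the sample are assumptions made for illustration, not the service's documented schema.

```python
import json


def parse_sse(lines):
    """Yield (event, data) pairs from an iterable of SSE text lines.

    Handles "event:" and "data:" fields; a blank line ends each event.
    """
    event, data = "message", []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if line == "":
            # Blank line: dispatch the buffered event if it carries data.
            if data:
                yield event, "\n".join(data)
            event, data = "message", []
        elif line.startswith(":"):
            continue  # comment or keep-alive line
        else:
            field, _, value = line.partition(":")
            if value.startswith(" "):
                value = value[1:]  # strip the single optional leading space
            if field == "event":
                event = value
            elif field == "data":
                data.append(value)


# Hypothetical stream: event names and payload fields are illustrative only.
SAMPLE = [
    ": keep-alive",
    "event: memory_stored",
    'data: {"content_hash": "abc123", "tags": ["agent:researcher"]}',
    "",
    "event: memory_deleted",
    'data: {"content_hash": "abc123"}',
    "",
]

events = [(name, json.loads(payload)) for name, payload in parse_sse(SAMPLE)]
```

In a live pipeline, the lines would come from a streaming HTTP response (for example, `iter_lines()` on an `httpx` streamed response) instead of `SAMPLE`. The exact events endpoint is not given in this section and should be taken from the service's API docs.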

Agent Quick Start

pip install mcp-memory-service

MCP_ALLOW_ANONYMOUS_ACCESS=true memory server --http

# REST API running at http://localhost:8000

import httpx

BASE_URL = "http://localhost:8000"